How to Price Test

16+ tips from scaling a $50m+ app

Hey there, it’s Jacob at Retention.Blog 👋

I got tired of reading high-level strategy articles, so I started writing actionable advice I would want to read.

Every week I share practical learnings you can apply to your business.

Retention.Blog is sponsored by Botsi

Botsi is AI-powered pricing and personalized offers for your subscription app.

One of the most impactful levers is always price.

The price of your app is intimately connected to your success and ability to grow.

Also, this is called Retention.Blog, so we can’t forget about the retention impact of price.

One of the best ways to get someone to stick around and use your app is convincing them to pay!

How do you convince them to pay? Show them the right price.

It’s not true in 100% of cases, but generally, your paying users have better retention than free users.

Correlation vs causation - did paying cause them to stick around? Or did they see a lot of value and it aligned with their needs so they paid?

Realistically, it’s a bit of both.

Psychologically, by paying, you’re making a commitment to use something. Otherwise, why would you have paid?

What can you try?

Michal Parizek of Mojo wrote a blog post for Botsi and recorded a podcast for Price Power about the learnings from his work.

I learned so much from Michal, and I’m pretty sure you will too.

From Michal:

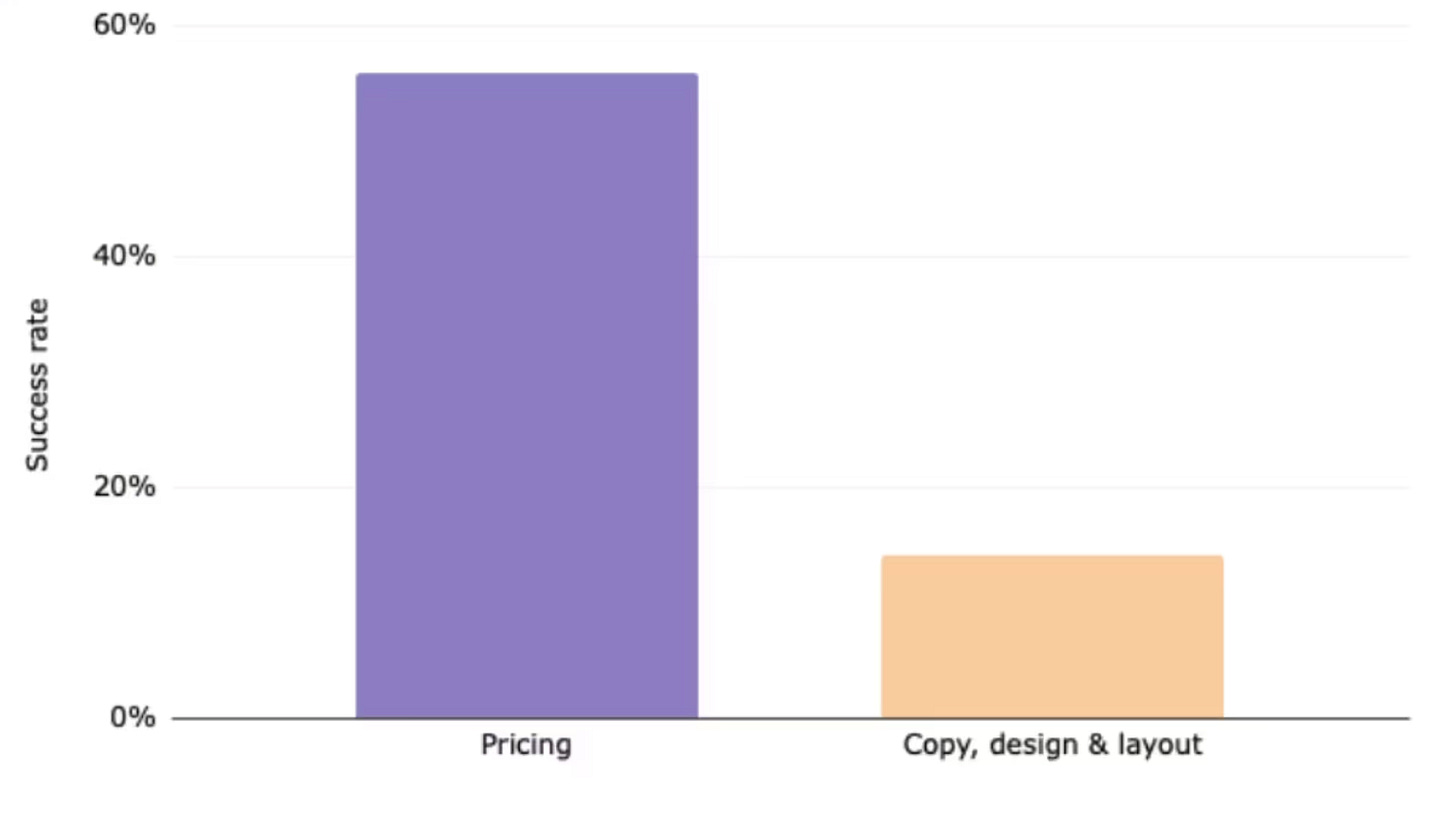

Our success rate for pricing experiments was 56%, compared to only 14% for design, copy, and layout tests. That difference alone explains why we’ve prioritized pricing over everything else. In fact, we ran nearly 40 pricing experiments in the past two years.

Listen or watch on YouTube, Spotify, and Apple Podcasts.

What is the right sequence of testing and optimization?

Step 1: Get the price right first

Start with your top markets individually (not a global average).

Look at competitor pricing, your conversion funnels, and UA costs per country. If Brazil is way off, fix Brazil. Localization isn’t a later step; it’s part of getting the baseline price right.

How much this matters depends on your country mix, of course. If you’re heavily concentrated in one country, focus there and come back to localization later.

But there are likely 3-6 countries that are important for you; work out what the right price should be in each of them.

Step 2: Optimize packaging / subscription duration.

Once you’re confident the price is roughly correct, figure out which plan length (yearly, monthly, weekly) drives the most long-term value, and then optimize share toward that plan.

This includes testing the ratio between your yearly and monthly prices.

Michal says that adjusting the monthly price (while holding yearly constant) can dramatically shift behavior toward annual plans.

One of the biggest wins for your app is increasing the percentage of people purchasing annual plans, and raising the monthly price can increase the perceived value of the annual plan.

Step 3: Tweak prices with finer precision.

After packaging is set, come back and do more targeted price adjustments.

Try tighter increments now that you know the rough range and the right plan structure.

Your ability to do this well depends on user volume. If you have lower volume, you can probably skip this until later.

Step 4: Introductory offers and trial optimization.

Test free trial vs. no trial, trial length, selective trials by plan or region, and paid trial options.

Step 5: Paywall design and layout.

Michal was pretty direct that design/copy/layout tests were the lowest priority.

His experience showed only a 14% success rate on those compared to 56% for pricing tests.

That said, the most impactful layout tests were ones that effectively influenced packaging (like hiding the monthly plan behind “View all plans”).

FYI, this isn’t strictly a linear process.

But the core message was: don’t start with paywall redesigns when you haven’t validated your prices and plan structure first.

Image from: Pricing Experiments: The Backbone of Mojo’s Monetization Success

What are other tips and tests we can try?

1. Hide Secondary Plans Behind a “View All Plans” Button

Put the monthly plan behind a separate screen so the yearly plan appears to be the only option.

At Mojo this shifted yearly plan share from roughly 60% to 80% with no meaningful drop in overall conversion. The monthly plan still serves as a decoy that makes the yearly plan look cheaper.

2. Test Wide Price Spreads First, Narrow Later

Don’t test $48 vs $50 vs $52.

Start with big gaps like $40 vs $60 vs $80 to find the rough sweet spot, then run follow-up tests with tighter increments.
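Here is a minimal sketch of that wide-then-narrow sequence. The helper names `price_grid` and `refine_around` are hypothetical, and the rule of re-centering on the winner with half the previous spacing is an assumption for illustration, not Mojo’s documented process.

```python
def price_grid(low: float, high: float, arms: int) -> list[float]:
    """Evenly spaced test prices from low to high, inclusive."""
    if arms < 2:
        raise ValueError("a price test needs at least two arms")
    step = (high - low) / (arms - 1)
    return [round(low + i * step, 2) for i in range(arms)]


def refine_around(best: float, previous_step: float, arms: int = 3) -> list[float]:
    """Next-round grid centered on the winning price, spanning one previous step.

    Hypothetical rule: keep the winner in the middle and halve the spacing.
    """
    half = previous_step / 2
    return price_grid(best - half, best + half, arms)


# Round 1: wide spread to find the rough sweet spot.
round_1 = price_grid(40, 80, 3)   # 40, 60, 80
# Suppose $60 wins round 1 (spacing was $20); round 2 tests tighter increments.
round_2 = refine_around(60, 20)   # 50, 60, 70
print(round_1, round_2)
```

With lower volume, stopping after the first wide round is a reasonable choice, since each narrower round needs more users to separate arms that sit closer together.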

3. Use 7-Day Cancellation Rate as an Early Renewal Proxy

Over 40% of subscription cancellations happen in the first 7–10 days.

Track 7-day cancellation rate per variant and use it to project renewal rates and 13-month revenue. This lets you call a winner weeks earlier rather than waiting months for true renewal data.

Look at your historical data for the rate of users turning off auto-renew in the first 2 weeks to 1 month.

Then look at the final renewal rate for that same cohort of users.

This gives you a ratio between early cancellations (within the first X days) and users who renew at 1 year.

Then apply that ratio to each variant’s converted users to project how many will still be paying at year 1.
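The projection steps above can be sketched roughly like this. It assumes that users who don’t cancel early go on to renew at the same rate in every variant as they did in your historical cohort. The function names and all numbers are hypothetical.

```python
def keep_to_renew_ratio(hist_early_cancel_rate: float, hist_year1_renewal_rate: float) -> float:
    """Of historical subscribers who did NOT cancel early, the share that renewed at year 1."""
    kept = 1.0 - hist_early_cancel_rate
    if kept <= 0:
        raise ValueError("historical early-cancel rate must be below 100%")
    return hist_year1_renewal_rate / kept


def project_year1_renewal(variant_early_cancel_rate: float, ratio: float) -> float:
    """Projected year-1 renewal rate for a variant, from its early (e.g. 7-day) cancel rate.

    ASSUMPTION: non-early-cancelers renew at the historical ratio in every variant.
    """
    return min(1.0, ratio * (1.0 - variant_early_cancel_rate))


# Hypothetical historical cohort: 40% turned off auto-renew early, 30% renewed at year 1.
ratio = keep_to_renew_ratio(0.40, 0.30)

# Hypothetical test variants: (name, 7-day cancellation rate, converted subscribers)
for name, cancel_7d, converted in [("A", 0.35, 1000), ("B", 0.50, 1200)]:
    renewal = project_year1_renewal(cancel_7d, ratio)
    print(f"{name}: projected renewal {renewal:.1%}, ~{converted * renewal:.0f} paying at year 1")
```

With these made-up inputs, variant B converts more users (1,200 vs. 1,000), but A ends up with more projected payers at year 1 (about 325 vs. 300).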

4. Project 13-Month Revenue, Not Just New Revenue

For each test variant, combine new revenue with projected renewal revenue through month 13.

A 13-month window covers one full annual renewal cycle, so yearly plans can be compared accurately with monthly plans.

Michal shared a case where a higher price point won on short-term new revenue but lost on 13-month projections because of elevated cancellation rates. The moderate increase was the actual winner.
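Here is a rough sketch of a 13-month comparison. The `VariantResult` class and every number in it are hypothetical, and the constant month-over-month retention for monthly plans is a simplifying assumption. Swap in your real renewal curves if you have them.

```python
from dataclasses import dataclass


@dataclass
class VariantResult:
    name: str
    yearly_subs: int
    yearly_price: float
    yearly_renewal_rate: float  # projected share renewing at month 12 (e.g. from 7-day cancels)
    monthly_subs: int
    monthly_price: float
    monthly_retention: float    # ASSUMED constant month-over-month retention

    def new_revenue(self) -> float:
        """First-payment revenue only."""
        return self.yearly_subs * self.yearly_price + self.monthly_subs * self.monthly_price

    def revenue_13_months(self) -> float:
        """New revenue plus projected renewals over a 13-month window."""
        # Yearly: the first charge plus one renewal at month 12.
        yearly = self.yearly_subs * self.yearly_price * (1 + self.yearly_renewal_rate)
        # Monthly: 13 charges (months 0..12); charge k is paid by retention**k of the cohort.
        monthly = sum(
            self.monthly_subs * self.monthly_price * self.monthly_retention ** k
            for k in range(13)
        )
        return yearly + monthly


# Hypothetical numbers: the higher price earns more up front but cancels more.
moderate = VariantResult("moderate", 100, 60.0, 0.40, 50, 10.0, 0.80)
high = VariantResult("high", 90, 72.0, 0.25, 45, 12.0, 0.70)
for v in (moderate, high):
    print(v.name, round(v.new_revenue()), round(v.revenue_13_months()))
```

Here the higher price wins on new revenue (7,020 vs. 6,500) but loses over 13 months (about 9,883 vs. 10,763). That is the same pattern as the case Michal described.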

5. Test Aggressive Price Increases

Mojo raised yearly prices 50% in the US and Germany with minimal conversion impact. They even tested 100% increases.

But don’t do it blindly: research the market and your conversion rates first.

Michal felt this would be okay because prices hadn’t been updated in 2–3 years, competitors charged significantly more, and conversion benchmarks were above average for the category.
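Before testing a big increase, it can help to know how much conversion you can afford to lose. Here is a small sketch. It assumes first-payment revenue per visitor is simply price × conversion, so it ignores renewals and cancellations, which the 13-month view handles. The function name is hypothetical.

```python
def max_conversion_drop(price_increase: float) -> float:
    """Largest relative drop in conversion a price increase can absorb before
    first-payment revenue per visitor falls below the old price's.

    Revenue per visitor = price * conversion, so (1 + increase) * (1 - drop) >= 1
    holds while drop <= increase / (1 + increase).
    """
    if price_increase <= -1:
        raise ValueError("price_increase must be greater than -100%")
    return price_increase / (1 + price_increase)


for increase in (0.25, 0.50, 1.00):
    print(f"+{increase:.0%} price -> break-even at a {max_conversion_drop(increase):.1%} conversion drop")
```

By this arithmetic, a 50% increase breaks even at a one-third drop in conversion, and a 100% increase breaks even at a one-half drop.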

6. Localize Prices by Market (Don’t Trust Apple’s Auto-Tiers)

Apple just converts using exchange rates, with no purchasing power adjustment.

Research competitor pricing per geo, compare conversion funnels across countries, and factor in local UA costs.

When Mojo dropped prices 25% in Mexico, they saw a 25% lift in new revenue.
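To read a localization result like Mexico’s, you can back out the change in purchase volume it implies. This sketch assumes new revenue is price × purchases with the same traffic and plan mix, which a real test may not hold exactly. The function name is hypothetical.

```python
def implied_purchase_change(price_change: float, revenue_change: float) -> float:
    """Relative change in purchases implied by a price change and the observed
    new-revenue change, ASSUMING revenue = price * purchases (same traffic and plan mix)."""
    return (1 + revenue_change) / (1 + price_change) - 1


# Mojo's Mexico result from the tip above: prices -25%, new revenue +25%.
print(f"{implied_purchase_change(-0.25, 0.25):.0%} more purchases")
```

Under those assumptions, a 25% price cut that lifts new revenue by 25% implies roughly two-thirds more purchases.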

7. Anchor the Yearly Price with a Monthly Equivalent

Add a simple line like “equivalent to $X/month” next to the yearly price.

This was a low-effort test at Mojo that had an outsized impact, especially in lower purchasing power regions.
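Here is a minimal sketch of generating that anchor line. The function name and the hard-coded `$` are assumptions. In a shipping app, you would format the amount with the store’s localized price formatting, since currencies differ in symbols and decimal places (JPY, for example, has no minor units).

```python
def monthly_equivalent_label(yearly_price: float, currency_symbol: str = "$") -> str:
    """Anchor copy shown next to the yearly price, rounded to the nearest cent."""
    per_month = yearly_price / 12
    return f"equivalent to {currency_symbol}{per_month:.2f}/month"


print(monthly_equivalent_label(47.88))
```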

8. Optimize the Yearly-to-Monthly Price Ratio

Many apps default to a 1:7 ratio (e.g., $10/month vs $70/year).

Mojo found that a 1:4 ratio drove significantly more yearly subscriptions while holding overall conversion steady.

Will that ratio be the right one for you? It all depends on your monthly renewal rates.

Dan Layfield has some good thinking here on pricing ratios.
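Here is a sketch for comparing ratios. It assumes the ratio means the yearly price expressed in months of the monthly price, so $70/year against $10/month is 1:7. The constant monthly retention rate is a simplifying, hypothetical input, and the sketch ignores users who would only ever buy one of the two plans.

```python
def ratio_and_discount(monthly_price: float, yearly_price: float) -> tuple[float, float]:
    """Yearly price expressed in months of the monthly plan, and the implied
    discount versus paying monthly for 12 months."""
    months = yearly_price / monthly_price
    return months, 1 - months / 12


def expected_monthly_payments(monthly_retention: float, months: int = 12) -> float:
    """Expected payments in the first year from one monthly subscriber,
    ASSUMING a constant month-over-month retention rate."""
    return sum(monthly_retention ** k for k in range(months))


for yearly in (70, 40):  # 1:7 and 1:4 against a $10 monthly plan
    months, discount = ratio_and_discount(10, yearly)
    print(f"$10/mo vs ${yearly}/yr -> 1:{months:g}, {discount:.0%} off")

# If monthly subscribers retain 60% month over month (hypothetical), they pay for
# ~2.5 months in year one, fewer than the 4 months a 1:4 yearly plan collects up front.
print(round(expected_monthly_payments(0.60), 2))
```

At 1:7, the yearly plan is about 42% off twelve monthly payments; at 1:4, it’s about 67% off. If your monthly subscribers churn quickly, a 1:4 yearly plan can still collect more in year one from users who switch over from monthly.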

9. A/B Test Free Trial vs. No Trial

At Mojo, removing the free trial for new users hurt conversion.

Test it for your app; the other option could end up being better for you.

Also, try offering a free trial on the annual plan but not on the monthly plan.
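To check whether a trial vs. no-trial difference is real rather than noise, one option is a standard two-proportion z-test. This is a generic statistical technique, not necessarily what Mojo used, and it assumes reasonably large samples. The counts below are hypothetical. Measure paid conversion rather than trial starts, and still check the 13-month view before shipping.

```python
import math


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int) -> tuple[float, float]:
    """Two-sided z-test for a difference in conversion rates between arms A and B.

    Returns (z, p_value); z > 0 means B converts better than A.
    """
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:  # both arms at 0% or 100%: no measurable difference
        return 0.0, 1.0
    z = (p_b - p_a) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # equals 2 * (1 - Phi(|z|))
    return z, p_value


# Hypothetical counts: A = free trial, B = no trial; "conversion" = paid, not trial starts.
z, p = two_proportion_z_test(conv_a=150, n_a=1000, conv_b=100, n_b=1000)
print(f"z = {z:.2f}, p = {p:.4f}")
```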

10. Test Trial Length: 3-Day vs 7-Day

Mojo found virtually no difference in trial conversion between 3-day and 7-day trials, so they shipped the shorter one because it reduces revenue delay and improves UA payback periods.

11. Offer Trials Selectively by Plan and Region

Mojo only offered free trials on yearly plans (not monthly) as another lever to push annual adoption.

But in Brazil and Mexico, removing the monthly trial backfired. Those markets had the highest share of monthly subs and valued trials more.

Regional exceptions matter more the bigger you get.

12. Test Paid Trials (e.g., 30 Days for $5–10)

At the time of recording, Mojo was actively testing offering users a choice: a short free trial or a longer paid trial (like 30 days for $5–10).

This approach has started being called “Design your trial.”

One note: it does complicate analysis and requires enough volume to evaluate properly.

13. Use Data-Triggered Discounts Instead of Broad Seasonal Sales

Mojo’s broad Black Friday discount was net negative for new users (who already had high intent) and underperformed in high-purchasing-power markets like the US.

It only worked for existing free users and lower-income regions.

If you’ve run a broad sale campaign in the past, dig into the data about who actually converted, and where the lifts came from compared to your baseline.

Unfortunately, this data is hard to get without first running a broad sale campaign one year and applying the learnings to the next sale.

14. Discount Based on User Lifecycle Stage

By analyzing conversion by user age, Mojo found a clear drop-off around day 30.

Offering a 30% discount at that point boosted revenue per user by 87%.

A second trigger at day 90 with a 50% discount also performed well.
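Here is a sketch of lifecycle-triggered offers. The thresholds mirror Mojo’s day-30 / 30% and day-90 / 50% results. The function, the rule that subscribers never see an offer, and the lack of frequency capping are all hypothetical choices for illustration. Find your own drop-off points from conversion by user age.

```python
from typing import Optional

# (minimum days since install, discount), checked from the latest trigger down.
# Day 30 / 30% and day 90 / 50% mirror Mojo's results; tune them to your own data.
DISCOUNT_TRIGGERS = [(90, 0.50), (30, 0.30)]


def lifecycle_discount(days_since_install: int, is_subscriber: bool) -> Optional[float]:
    """Discount to offer a non-paying user at this age, or None if no trigger applies.

    Hypothetical rule: subscribers never see the offer, and a user who has passed
    a trigger day gets that tier's discount. Frequency capping is left out.
    """
    if is_subscriber:
        return None
    for min_days, discount in DISCOUNT_TRIGGERS:
        if days_since_install >= min_days:
            return discount
    return None


print([lifecycle_discount(day, is_subscriber=False) for day in (7, 30, 95)])
```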

15. Segment Results by Traffic Source

Michal emphasized checking whether your UA channel mix (Meta vs TikTok vs organic) shifted during a test, since different sources bring users with very different intent and price sensitivity.

If your traffic distribution changed mid-test, that can explain weird results.
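Here is a minimal sketch of segmenting a test by traffic source, using hypothetical event tuples. `conversion_by_source` breaks conversion out per variant and channel. `source_share` shows the channel mix for any slice you pass it, such as one variant or the first vs. second half of the test.

```python
from collections import Counter, defaultdict


def conversion_by_source(events):
    """events: iterable of (variant, source, converted) -> {(variant, source): conversion rate}."""
    users = defaultdict(int)
    payers = defaultdict(int)
    for variant, source, converted in events:
        users[(variant, source)] += 1
        payers[(variant, source)] += int(converted)
    return {key: payers[key] / users[key] for key in users}


def source_share(sources):
    """Share of users per traffic source, e.g. for one variant or one week of the test."""
    counts = Counter(sources)
    total = sum(counts.values())
    return {source: n / total for source, n in counts.items()}


# Hypothetical events: (variant, source, converted)
events = [
    ("A", "meta", True), ("A", "meta", False), ("A", "organic", True),
    ("B", "tiktok", False), ("B", "tiktok", False), ("B", "meta", True),
]
print(conversion_by_source(events))
print(source_share(src for v, src, _ in events if v == "B"))
```

In this toy data, B’s traffic is two-thirds TikTok while A has none, so a raw A-vs-B comparison would mix the price effect with a channel effect.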

16. Re-Validate Cohorts 3–6 Months Later

Don’t assume a winner at day 14 stays a winner.

Go back and check those cohorts at 3 and 6 months to see if LTV projections held. Monthly renewal patterns and annual cancellation rates can shift over time.

This one is so important. You make predictions, but you need to monitor real behavior and continually be re-validating your assumptions!
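One way to make re-validation routine is to store each cohort’s projection and flag the ones that drift. Here is a sketch with hypothetical cohorts, a hypothetical metric (revenue per user at month 6), and a hypothetical 10% tolerance.

```python
def projection_drift(projected: dict, actual: dict, tolerance: float = 0.10) -> dict:
    """Cohorts whose observed value differs from the projection by more than
    `tolerance` (relative error). Cohorts without actuals yet are skipped."""
    flagged = {}
    for cohort, proj in projected.items():
        if cohort not in actual or proj == 0:
            continue
        rel_error = (actual[cohort] - proj) / proj
        if abs(rel_error) > tolerance:
            flagged[cohort] = round(rel_error, 3)
    return flagged


# Hypothetical: month-6 revenue per user as projected at day 14 vs. as observed.
projected = {"A": 9.80, "B": 10.50}
observed = {"A": 9.50, "B": 8.40}
print(projection_drift(projected, observed))
```

Here B would be flagged at about 20% below its projection, a cue to revisit the assumptions behind calling it a winner.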

Thank you, Michal!!

This is years of learnings and experiments, so we’re grateful to be able to learn from him.

You can go read the full blog post here:

https://www.botsi.com/blog-posts/pricing-experiments-the-backbone-of-mojos-monetization-success

And you can watch or listen to the full podcast on YouTube, Spotify, and Apple Podcasts.

Want to get some help with your price optimization?

Botsi is AI-powered dynamic and personalized offers for your subscription app.

Botsi builds and trains custom AI/ML models on your app and your paywalls, to figure out the right offer to maximize LTV for each user.

It doesn’t only sound cool, it works! (and is 100% compliant with Apple and Google)

Botsi combines device data (OS, model, country, etc.) with your custom user data and attribution data to get a high-quality signal about the likelihood to pay and convert.

📣 Want to help support and spread the word?

Go to my LinkedIn here and like, comment, or share my posts.
